Introduction

Have you ever wondered how your smartphone or PC can run multiple apps seamlessly without freezing? Or how a server manages to handle requests from thousands of users simultaneously? The answer to these questions lies in the fundamental concepts of concurrency, parallelism and multitasking. These terms are sometimes used interchangeably, so I want to clarify them in depth and explain how they differ and how they are used in modern software development.

I will revisit this post in the future and keep editing it as I refine the quality of my writing and the content itself.

What is Concurrency?

Definition

Concurrency is the ability of a system to make progress on multiple tasks or processes over overlapping periods of time, without necessarily executing them at the same instant. Instead, tasks are broken down and interleaved so that they appear to run at the same time, even if they aren’t.

Simpler Explanation

To understand this, let’s take a real-world scenario. Imagine a chef in a restaurant who has to prepare three different dishes at the same time. The chef could work on one dish at a time (that is, serially), but the dishes would come out slowly and leave customers frustrated. The more natural option is to make progress on all three dishes together. To do that, the chef can break the work for each dish into smaller steps and prioritize them based on the preparation each dish needs (e.g., cutting and peeling the onions, kneading the dough for the pizza, and so on). The chef then works on dish 1 for a bit, jumps to dish 2, goes back to dish 1, moves on to dish 3, and so on. This gives the illusion that all the dishes are progressing at the same time, but in reality the chef is switching between tasks very quickly.

This is what concurrency really is. Programs usually need to handle multiple tasks, such as processing user input, reading files and making network requests. On a single core, the CPU rapidly switches between the tasks of the running programs via context switching, giving the illusion that they are handled simultaneously. However, context switching comes with overhead: the CPU needs to save the state of the current task and restore the state of another, and excessive context switching can hurt performance. I will explain this in a future blog post.
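As a rough illustration of the chef switching between dishes, here is a minimal sketch using Python’s `asyncio`. The dish names and the `prepare_dish` and `run_kitchen` functions are made up for this example. Everything runs on a single thread, and each `await` is the point where one task steps aside so another can make progress.

```python
import asyncio


async def prepare_dish(name: str, steps: int, log: list) -> str:
    """Work on one dish, handing control back after every step."""
    for step in range(1, steps + 1):
        log.append(f"{name}: step {step}")
        # Yield to the event loop so another dish can make progress.
        await asyncio.sleep(0)
    return f"{name} ready"


async def run_kitchen():
    """Prepare three hypothetical dishes concurrently on one thread."""
    log = []
    results = await asyncio.gather(
        prepare_dish("pizza", 2, log),
        prepare_dish("soup", 2, log),
        prepare_dish("salad", 2, log),
    )
    return list(results), log


if __name__ == "__main__":
    results, log = asyncio.run(run_kitchen())
    print("\n".join(log))  # steps from different dishes are interleaved
    print(results)
```

Note that this is cooperative switching inside one program, which is a different mechanism from the CPU context switching described above, but the interleaving idea is the same: no two steps ever run at the same instant.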

Common Real World Use Cases

- Handling multiple user requests on a web server

- Handling asynchronous events in an event-driven programming model

Parallelism

Definition

Parallelism is a subset of concurrency in which tasks literally run at the same time, typically on separate processors or cores.

Simpler Explanation

This is where tasks are truly handled in parallel. Going back to our restaurant example, think of two chefs working on different dishes at the same time. Similarly, a single chef can bake multiple batches of cookies in different ovens at the same time. That’s parallelism. In computing, parallelism excels at heavy computations such as data processing, graphics rendering and ML model training across multiple cores or machines.
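To make the "multiple chefs" idea concrete, here is a minimal sketch that splits a CPU-bound job across worker processes with Python’s `concurrent.futures.ProcessPoolExecutor`. The helper names (`split_into_chunks`, `sum_of_squares`, `parallel_sum_of_squares`) and the workload are assumptions chosen for illustration; whether the chunks really run simultaneously depends on how many cores the machine has.

```python
import os
from concurrent.futures import ProcessPoolExecutor


def split_into_chunks(data, n_chunks):
    """Split data into at most n_chunks contiguous slices of similar size."""
    size = max(1, -(-len(data) // n_chunks))  # ceiling division
    return [data[i:i + size] for i in range(0, len(data), size)]


def sum_of_squares(chunk):
    """CPU-bound work that can be done independently on each chunk."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(data, workers):
    """Process each chunk in a separate worker process, then combine."""
    chunks = split_into_chunks(data, workers)
    with ProcessPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(sum_of_squares, chunks))
    return sum(partials)


if __name__ == "__main__":
    numbers = list(range(1_000_000))
    workers = os.cpu_count() or 1
    total = parallel_sum_of_squares(numbers, workers)
    # The parallel result should match the serial one.
    print(total == sum_of_squares(numbers))
```

Processes are used here rather than threads because, in the standard CPython interpreter, threads generally do not execute Python bytecode on several cores at once. The `if __name__ == "__main__":` guard matters on platforms that start worker processes by re-importing the script.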

Common Real World Use Cases

- Data Processing: Large datasets can be split into smaller chunks and processed in parallel (e.g. MapReduce).

- Image or Video Processing: Rendering frames of a video on different processors at the same time.

- High-Performance Computing (HPC): Large scientific simulations run on supercomputers using parallelism to solve complex problems (e.g., climate modeling).

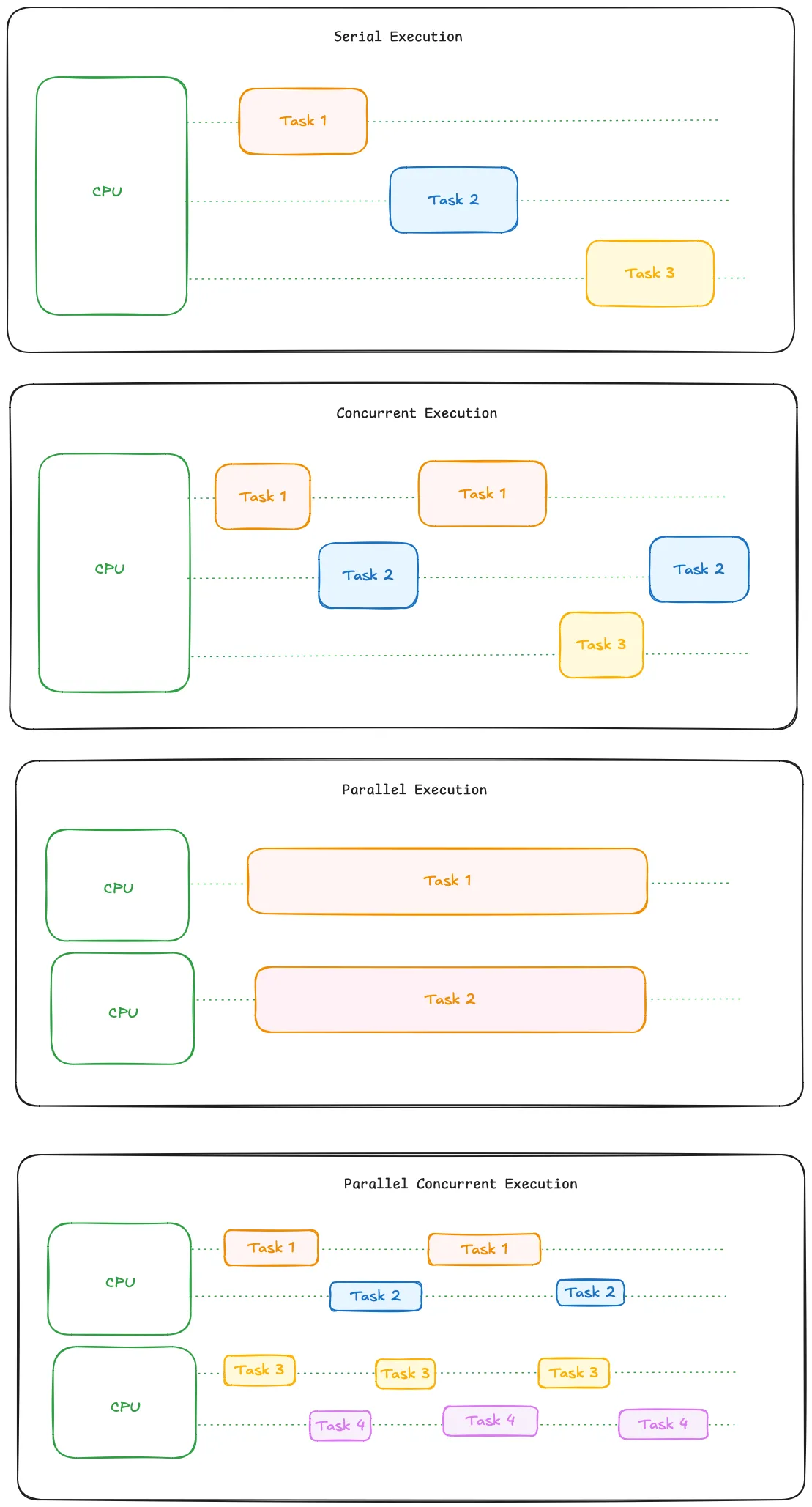

The diagram here illustrates the different combinations of serial, concurrent, parallel, and concurrent-and-parallel tasks handled by the CPU.

Multitasking

Definition

Multitasking refers to the ability of an operating system to manage multiple tasks simultaneously, switching between them so rapidly that it appears all tasks are being handled at once.

Simpler Explanation

On a computer, you can run a text editor like Notepad and a web browser at the same time. The operating system manages this by rapidly switching between the tasks. Coming back to our restaurant example, the chef can answer a phone call while cooking - the chef switches between the two tasks quickly but can only focus on one thing at a time. Multitasking is often implemented using concurrency techniques and doesn’t necessarily involve parallelism (you can multitask on a single-core CPU).
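A small sketch of the same idea inside one program: two threads standing in for two "apps" that the operating system schedules independently. The app names, step counts and the `run_app` and `run_apps` helpers are hypothetical, and `time.sleep` is only a stand-in for real work.

```python
import threading
import time


def run_app(name, steps, log, lock):
    """Pretend to be an application doing a few units of work."""
    for step in range(1, steps + 1):
        time.sleep(0.01)  # while this thread sleeps, the other one can run
        with lock:  # protect the shared log while appending
            log.append((name, step))


def run_apps():
    """Start two hypothetical apps and wait for both to finish."""
    log = []
    lock = threading.Lock()
    threads = [
        threading.Thread(target=run_app, args=("editor", 3, log, lock)),
        threading.Thread(target=run_app, args=("browser", 3, log, lock)),
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return log


if __name__ == "__main__":
    for name, step in run_apps():
        print(f"{name} finished step {step}")
```

The order in which the two apps’ steps appear can change from run to run, because the scheduler, not the program, decides which thread runs next.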

Concurrency vs. Parallelism vs. Multitasking

Direct Comparison:

Concurrency: Handling multiple tasks in an overlapping manner without necessarily executing them simultaneously.

Parallelism: Executing multiple tasks at the same time, often on multiple processors/cores.

Multitasking: An OS-level feature that allows multiple tasks to share CPU time, so they appear to run simultaneously even on a single processor.

Key Differences:

Concurrency can be achieved on a single-core processor by interleaving tasks (it’s more about managing time efficiently).

Parallelism requires multiple cores or processors and focuses on real simultaneous execution.

Multitasking allows for multiple applications or tasks to run concurrently, but it doesn’t guarantee that tasks are running in parallel.

Challenges in Concurrency and Parallelism

Concurrency Challenges:

Race Conditions: When multiple threads or processes try to modify the same data concurrently, leading to unexpected results.

Deadlocks: When two or more tasks are each waiting for another to release a resource or finish, so none of them can ever proceed and that part of the system freezes.

Synchronization: Ensuring tasks don’t interfere with each other’s state.
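As a minimal sketch of how synchronization guards against a race condition, the example below has several threads increment one shared counter. The `Counter` class and `count_with_threads` function are made up for illustration. Without the lock, `self.value += 1` is a read-modify-write sequence that threads could interleave and lose updates; with the lock, only one thread at a time can be inside that critical section.

```python
import threading


class Counter:
    """A shared counter whose updates are protected by a lock."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        # Critical section: read, add one, write back.
        with self._lock:
            self.value += 1


def count_with_threads(n_threads, increments_each):
    """Have several threads increment the same counter and return the total."""
    counter = Counter()

    def worker():
        for _ in range(increments_each):
            counter.increment()

    threads = [threading.Thread(target=worker) for _ in range(n_threads)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return counter.value


if __name__ == "__main__":
    print(count_with_threads(4, 10_000))
```

Locks solve the race but introduce the deadlock risk described above if a task ever needs more than one of them; a common mitigation is to always acquire multiple locks in the same order.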

Parallelism Challenges:

Overhead: Managing multiple tasks can introduce overhead (task splitting, communication between tasks).

Scalability: Parallelism only helps up to a point. The part of a program that must run serially limits the overall speedup, so beyond some number of cores, adding more doesn’t improve performance.

Load Balancing: Distributing tasks evenly across processors can be challenging.
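The scalability limit can be estimated with Amdahl’s law, which says that if a fraction p of a program can be parallelized, the best possible speedup on n cores is 1 / ((1 - p) + p / n). The function below is a small sketch of that formula; the name `amdahl_speedup` and the example numbers are my own, and the model ignores the overhead and load-balancing costs listed above.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Upper bound on speedup when only part of the work can be parallelized."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Assume 90% of the program is parallelizable.
    for cores in (1, 2, 10, 100, 1000):
        print(cores, round(amdahl_speedup(0.9, cores), 2))
```

With 90% of the work parallelizable, 10 cores give a speedup of only about 5.3x, and no number of cores can exceed 10x, because the remaining 10% still runs serially.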

Conclusion

Understanding concurrency, parallelism, and multitasking is crucial for anyone involved in developing high-performance applications. As you build more complex systems, you’ll need to decide when to use concurrency, when to take advantage of parallelism, and how to work with the multitasking your operating system provides. In future edits, I will also dive into multiprocessing and multithreading.